LogRhythm leads with a customer-satisfaction approach in all that we do; that is one of the many reasons why we provide Analytic Co-Pilot Services. Our team works diligently to help customers improve security maturity through the implementation, use, and optimization of security analytics content and custom use cases.

Recap of Q3 2022 Analytic Co-Pilot use cases

Since our last quarterly update, things have not slowed down in the world of cybersecurity! To keep customers and the InfoSec community informed on our latest initiatives, this blog covers several examples of the Analytic Co-Pilot use cases we developed in Q3 2022. Some of these derive from Knowledge Base modules, but most are custom use cases tailored to a client’s specific security needs. In this post, we will cover Analytic Co-Pilot use cases that encompass:

- User and entity behavior analytics (UEBA)

- Endpoint detection and response (EDR)

- Cloud security

- Host threat detection

- Network detection and response (NDR)

Read on to learn how we deploy the use cases, as well as tune and test the rules. To understand the complexity of a use case and how it may apply to your environment, we provide a key at the start of each one to explain:

- Which module the use case aligns to

- The log sources required to successfully implement a use case

- Difficulty score to set up the use case and how complex it may be

- Impact rating to assess how a use case can positively impact threat monitoring

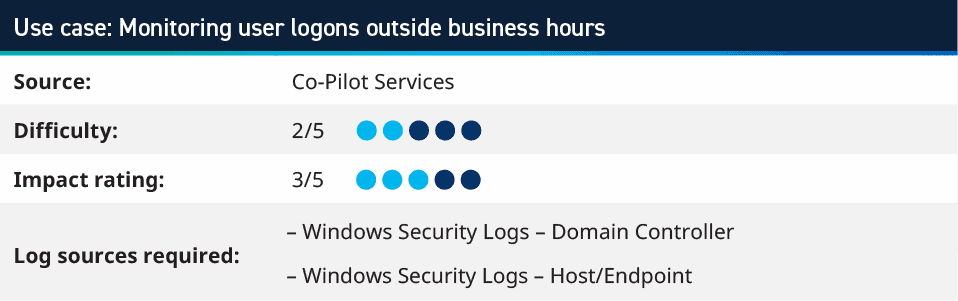

1. Monitoring user logons outside business hours

A simple yet effective rule to implement in your operations is monitoring which users log in outside of business hours. By tracking Authentication Success and setting day and time criteria, you can flag access from users who are not expected to log in outside of core company hours.

To tune this, look at those users that are logging in after hours and check the logon types. A user that has left their machine logged in would continue to generate logon type 3 (network) or 4 (batch), but you should not expect to see logon types 2 (interactive), 7 (unlock), or 10 (remote interactive). These would trigger alarms for you to investigate whether that user was accessing outside of hours and perhaps why.

Several customers have requested such alarms for their environment in the past few months, but it’s important to note that this alarm depends on how your organization defines working hours, what is expected of staff outside those hours, flexitime arrangements, and more.
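The day, time, and logon type criteria described above can be sketched in a few lines of Python. This is a minimal illustration rather than the LogRhythm rule itself: the business hours, the weekend handling, and the function name are assumptions you would adjust to your own working patterns.

```python
from datetime import datetime, time

# Assumed values for illustration; adjust to your organization's policy.
BUSINESS_START = time(8, 0)
BUSINESS_END = time(18, 0)
# Windows logon types that point to a person at the keyboard or a remote session:
# 2 (interactive), 7 (unlock), 10 (remote interactive).
SUSPICIOUS_LOGON_TYPES = {2, 7, 10}


def is_after_hours_logon(timestamp: datetime, logon_type: int) -> bool:
    """Return True for an interactive-style logon outside business hours."""
    weekend = timestamp.weekday() >= 5  # Saturday is 5, Sunday is 6
    outside_hours = not (BUSINESS_START <= timestamp.time() < BUSINESS_END)
    return logon_type in SUSPICIOUS_LOGON_TYPES and (weekend or outside_hours)
```

Logon types 3 and 4 are deliberately ignored here, since a machine left logged in keeps generating them overnight.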

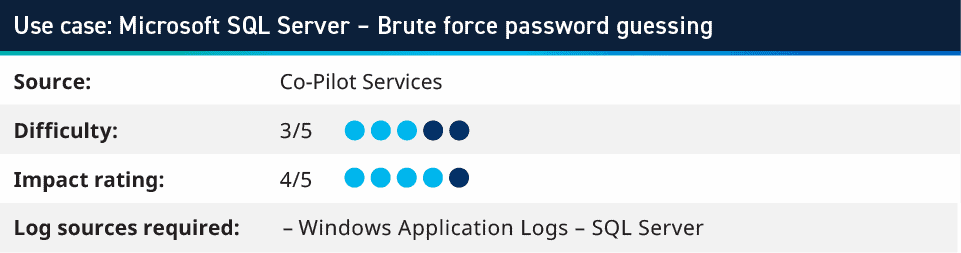

2. Microsoft SQL Server: Brute force password guessing

According to The DFIR Report, there has been an increase in the number of SQL Server brute force attacks. One of our senior Analytic Co-Pilot consultants took this report and reproduced the initial compromise within our lab environment to create a use case based on the brute force activity from the tools used. In this use case, you do not analyze typical security logs for authentication activity; instead, you assess the logs that SQL Server writes into the Application log. That way you can track logins to the application, and not just the operating system.

By recreating this activity, we discovered that building use cases on application logs can also provide great insight into brute force at the application layer and can enhance monitoring of automated authentication attacks.
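As a rough sketch of the idea, the Python below counts SQL Server failed logins per source address inside a sliding window. Event ID 18456 is the SQL Server "Login failed" event written to the Application log; the threshold, the window length, and the tuple layout of `events` are assumptions for illustration, not values taken from our rule.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta

FAILED_LOGIN_EVENT_ID = 18456   # SQL Server "Login failed" event in the Application log
THRESHOLD = 10                  # assumed: failures per source before flagging
WINDOW = timedelta(minutes=1)   # assumed: sliding window length


def find_brute_force_sources(events):
    """Return source addresses with THRESHOLD or more failed logins inside WINDOW.

    `events` is an iterable of (timestamp, event_id, source_ip) tuples sorted by time.
    """
    recent = defaultdict(deque)
    flagged = set()
    for timestamp, event_id, source_ip in events:
        if event_id != FAILED_LOGIN_EVENT_ID:
            continue
        window = recent[source_ip]
        window.append(timestamp)
        # Drop failures that have aged out of the sliding window.
        while timestamp - window[0] > WINDOW:
            window.popleft()
        if len(window) >= THRESHOLD:
            flagged.add(source_ip)
    return flagged
```

A source that fails once every few minutes never fills the window, so slow, legitimate mistakes are not flagged by this sketch.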

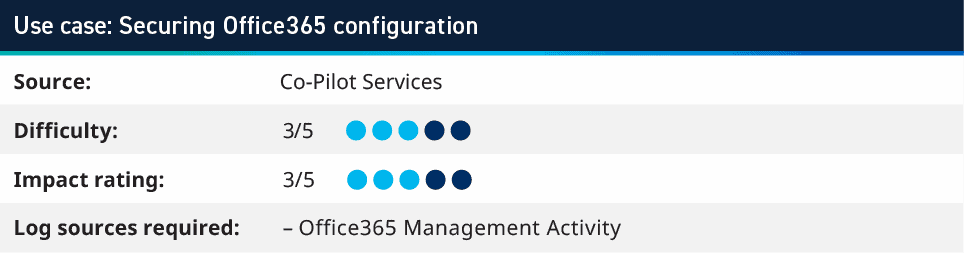

3. Securing Office365 configuration

When working with customers that have a single or few geographical locations, we can use Office365 Management Activity logs to monitor for any configuration activity that happens outside a set of expected IP addresses. Depending on an organization’s policies for remote working, VPNs, and configuring cloud systems, security teams may address this use case differently.

For example, one of my customers has restrictions where everything must be connected to a VPN; therefore, we can monitor to look for any configuration within O365 that comes from an IP address that is not within their public ranges. This helps identify administrators that are not following policy when working from home and not using a VPN. It also helped our customer detect a compromised user account that was used to modify O365 configuration from outside their corporate network.

Another customer is a national government agency. All of their user activity and configuration changes are expected to originate from the country they operate in. This means that we can use the GeoIP database that is included with our Knowledge Base updates to identify when any configuration changes (or even logins) happen from outside their expected geographical location.

When looking at configuration changes within O365 environments, there are occasionally logs for internal configuration changes that Microsoft itself completed; however, these come from IP addresses that Microsoft publishes. They can be tuned out of the alerts by using an integration from the Community to import the Microsoft 365 IP addresses to a list and exclude them from the configuration logs.
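The allow-list logic can be sketched with Python's standard `ipaddress` module. The ranges below are documentation addresses used as placeholders, and the list and function names are hypothetical; in practice the corporate ranges come from your own network team and the Microsoft ranges from the imported list.

```python
from ipaddress import ip_address, ip_network

# Hypothetical corporate public range (RFC 5737 documentation addresses).
CORPORATE_RANGES = [ip_network("203.0.113.0/24")]
# Placeholder standing in for the published Microsoft 365 ranges imported into a list.
MICROSOFT_RANGES = [ip_network("198.51.100.0/24")]


def is_unexpected_config_source(client_ip: str) -> bool:
    """Return True when a configuration change comes from outside every allowed range."""
    addr = ip_address(client_ip)
    allowed = CORPORATE_RANGES + MICROSOFT_RANGES
    return not any(addr in network for network in allowed)
```

For the geographic variant, the same shape applies with a country lookup in place of the range check.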

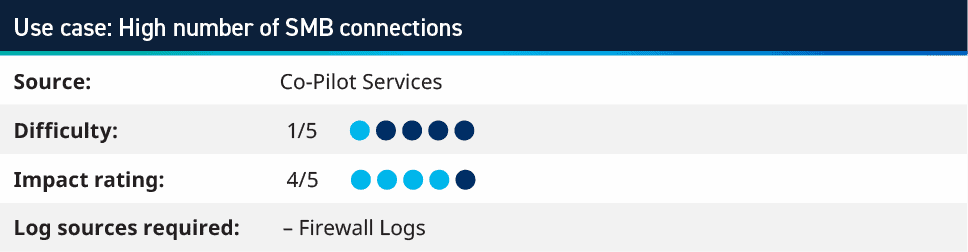

4. High number of SMB connections

This use case looks at all network classifications (allow and deny) where we are seeing a high number of Hosts Impacted. This typically means more than one hundred different Hosts Impacted within one minute, where the Transmission Control Protocol (TCP) Port Impacted is 445 or the application is Server Message Block (SMB). Since SMB is more likely than Internet Control Message Protocol (ICMP) to be open across a network, we monitor for host scans on SMB rather than ping scans using ICMP.

When implementing this use case, tuning is required. The typical tuning for this rule is to exclude your vulnerability scanners, connections from outside your network, and any other monitoring systems. With these exclusions in place, our customers have found penetration testers early in their engagements and have also found a compromised host. That host was used by external users to upload documents, and we found it scanning a subnet where our main contact knew that only ten hosts existed. When investigating further, we found Mimikatz as well as other scripts being used from the compromised system.
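The counting logic behind this rule can be approximated as follows. This is a sketch under stated assumptions: it groups flows into fixed one-minute buckets rather than a true sliding window, it matches on port 445 only, and the tuple layout and function name are hypothetical.

```python
from collections import defaultdict
from datetime import datetime
from typing import Iterable, Set, Tuple

HOST_THRESHOLD = 100  # from the rule: more than one hundred hosts impacted
SMB_PORT = 445

# (timestamp, origin host, impacted host, impacted port)
Flow = Tuple[datetime, str, str, int]


def find_smb_scanners(flows: Iterable[Flow], excluded_origins=frozenset()) -> Set[str]:
    """Return origin hosts that touched more than HOST_THRESHOLD hosts on SMB in one minute."""
    targets = defaultdict(set)  # (origin, minute bucket) -> distinct impacted hosts
    for timestamp, origin, impacted, port in flows:
        # Skip non-SMB traffic and tuned-out sources such as vulnerability scanners.
        if port != SMB_PORT or origin in excluded_origins:
            continue
        minute = timestamp.replace(second=0, microsecond=0)
        targets[(origin, minute)].add(impacted)
    return {origin for (origin, _), hosts in targets.items() if len(hosts) > HOST_THRESHOLD}
```

Passing your vulnerability scanners and monitoring systems as `excluded_origins` mirrors the tuning step described above.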

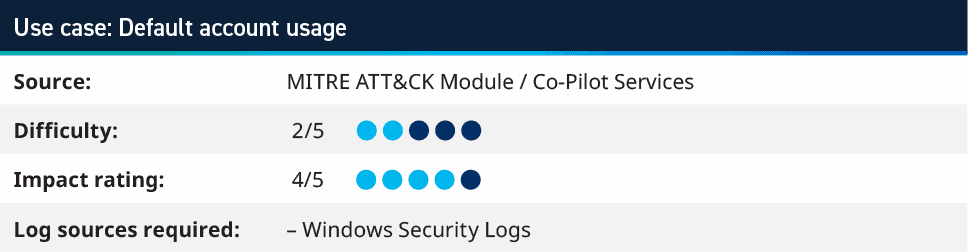

5. Default account usage

It is a well-known, established security standard that shared accounts shouldn’t be used within business-as-usual environments. They are great for break glass or initial setup, but using default accounts creates a gap in accountability where you can see that an “administrator” logged in, yet do not know who performed the action. The Default Account rules look for user activity using such accounts, so the first step is to build a list of default accounts for your environment. There are obvious ones such as Administrator and Guest; however, many environments also rename the Windows built-in Administrator account. Adding these accounts, as well as accounts that may be used by applications or other systems, allows you to track them.

Once you have the initial list of default accounts (which should be reviewed and updated frequently), you can create a use case to look for any Authentication Success or Failure using these accounts. Creating an initial Saved Search for this can help identify where such usage may already be happening and allow for either exclusions to be added or investigations to stop the existing use of these credentials.
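A minimal sketch of the list-based match is shown below. The event field names and the `corp-admin` entry (standing in for a renamed built-in Administrator account) are hypothetical; only Administrator, Guest, and the two authentication classifications come from the description above.

```python
# "corp-admin" is a hypothetical example of a renamed built-in Administrator account.
DEFAULT_ACCOUNTS = {"administrator", "guest", "corp-admin"}
AUTH_CLASSIFICATIONS = {"Authentication Success", "Authentication Failure"}


def is_default_account_auth(event: dict) -> bool:
    """Return True when an authentication event uses an account on the default list."""
    user = (event.get("user") or "").lower()
    # Strip a leading DOMAIN\ prefix so CORP\Administrator matches "administrator".
    user = user.split("\\")[-1]
    return event.get("classification") in AUTH_CLASSIFICATIONS and user in DEFAULT_ACCOUNTS
```

Running a check like this over historical events plays the same role as the initial Saved Search: it shows where default accounts are already in use before the alarm goes live.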

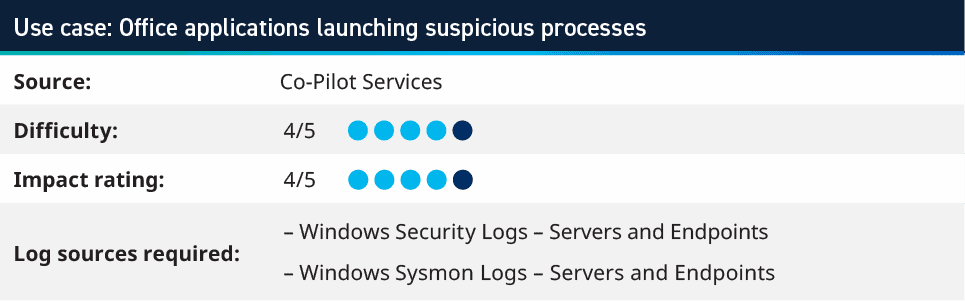

6. Office Applications Launching Suspicious Processes

Rooted in MITRE ATT&CK technique T1204.002 User Execution: Malicious File, this use case monitors for processes launching with Microsoft Office applications as the Parent Process. The rule usually requires endpoint logs to be sent to your security information and event management (SIEM) platform, as Office applications are not typically installed on servers. The most likely scenario is a user opening a document from email or from downloaded files, meaning that the logs are on the user’s device.

The rule looks for a Parent Process Name that matches your Office applications (winword.exe, excel.exe, powerpnt.exe) and a Process Name that appears in a list of processes that would not be expected to launch. The typical list includes cmd.exe, powershell.exe, wscript.exe, cscript.exe, regsvr32.exe, wmic.exe, and svchost.exe; however, many more can be added to the list.

If these processes are being launched from Office applications, it is an indicator that a macro-enabled document may have been opened and launched malicious code; therefore, you should investigate the host to see what else has happened and drill down further.
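The parent and child match can be expressed compactly in Python. The two lists are the ones named above; the function name is hypothetical, and the sketch assumes your endpoint logs may carry either bare process names or full Windows paths.

```python
import ntpath

OFFICE_PARENTS = {"winword.exe", "excel.exe", "powerpnt.exe"}
SUSPICIOUS_CHILDREN = {
    "cmd.exe", "powershell.exe", "wscript.exe", "cscript.exe",
    "regsvr32.exe", "wmic.exe", "svchost.exe",
}


def is_suspicious_office_child(parent_process: str, process: str) -> bool:
    """Return True when an Office application launched a process on the watch list."""
    # ntpath handles Windows-style paths regardless of the platform running this code.
    parent = ntpath.basename(parent_process).lower()
    child = ntpath.basename(process).lower()
    return parent in OFFICE_PARENTS and child in SUSPICIOUS_CHILDREN
```

Lowercasing both names matters because Windows logs often report WINWORD.EXE and EXCEL.EXE in upper case.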

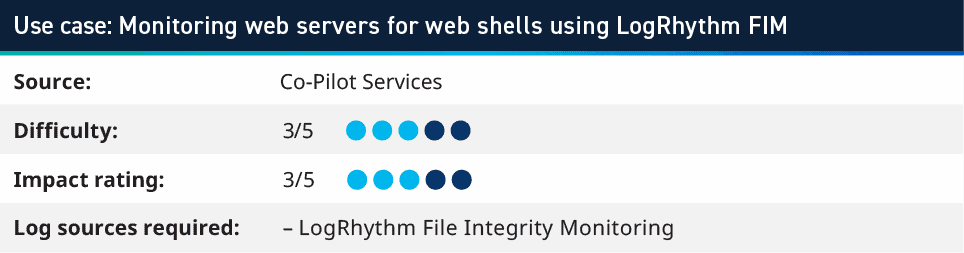

7. Monitoring web servers for web shells using LogRhythm FIM

Using the LogRhythm System Monitor File Integrity Monitoring feature, you can set up a policy to configure the agent to monitor the path to any websites on the server. In this example we are looking at Microsoft’s Internet Information Services (IIS), but this can be applied to other technologies. The policy would monitor the root directory for the server (typically C:\inetpub\wwwroot) and look for *.jsp, *.aspx, and *.php files with the action of Create or Modify. This will create a log whenever a file with one of those extensions is created or modified within the website root. The extensions mentioned are typically used for web shell exploits; however, other extensions could be monitored, depending on the web server technologies being used.

An attack vector we’ve observed is one where an exploit allowed a file upload to the web server directory, and the attacker then executed the payload via a URL where that file was available within the website. This rule looks for new files created in a typically static environment. When dealing with environments that change frequently or have more complex configurations, work with the owners of the web servers to understand what is considered normal and how to look for anything falling outside of those parameters. For example, if a lot of modifications come from a certain user, that account can be added as an exclusion for this use case while monitoring continues for any other new files.
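To make the policy criteria concrete, here is a small Python sketch that applies the same path, extension, action, and exclusion checks to a file event. It is not how the System Monitor agent is configured; the function name, the `svc-deploy` style of excluded user, and the event shape are assumptions for illustration.

```python
from pathlib import PureWindowsPath

WEB_ROOT = PureWindowsPath(r"C:\inetpub\wwwroot")
WATCHED_EXTENSIONS = {".jsp", ".aspx", ".php"}
WATCHED_ACTIONS = {"create", "modify"}


def is_possible_web_shell(path: str, action: str, user: str = "",
                          excluded_users=frozenset()) -> bool:
    """Return True for a watched file type created or modified under the web root."""
    file_path = PureWindowsPath(path)
    # PureWindowsPath compares case-insensitively, matching Windows file system behavior.
    in_web_root = WEB_ROOT in file_path.parents
    return (
        in_web_root
        and file_path.suffix.lower() in WATCHED_EXTENSIONS
        and action.lower() in WATCHED_ACTIONS
        and user not in excluded_users
    )
```

The `excluded_users` parameter corresponds to the tuning step of excluding an account that legitimately modifies site content.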

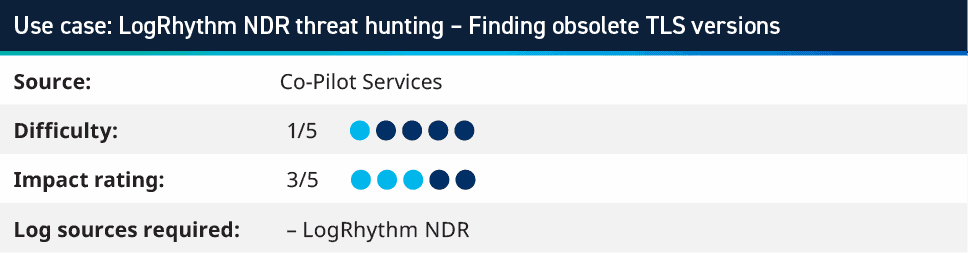

8. LogRhythm NDR threat hunting – finding obsolete TLS versions

LogRhythm NDR helps security teams gain visibility into many layers of the network traffic seen on the wire. With this data, we can monitor for unusual or undesired network activity. Because LogRhythm NDR stores the metadata for traffic, you can use it to look for SSL and TLS versions that are no longer considered secure. The IETF formally deprecated TLS 1.0 and TLS 1.1 in March 2021 via RFC 8996; therefore, you should monitor for any TLS connections that use these older protocol versions. This allows system owners to ensure that the right protocol versions and cipher suites are enabled on their systems and that communications are protected from known vulnerabilities. A search similar to the example below highlights those connections within your LogRhythm NDR environment.

tls_version:/.*/ AND -tls_version:"TLSv12" AND -tls_version:"TLSv13"

This can also be adapted and used within your SIEM, depending on whether the logs provided by your firewall or IDS/IPS include the TLS version being used.
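If you are adapting the search to another data source, the same logic (the field is present, and its value is neither TLSv12 nor TLSv13) looks like this in Python. The `tls_version` key and the two accepted values come from the search above; the record shape and function name are assumptions.

```python
ACCEPTED_TLS_VERSIONS = {"TLSv12", "TLSv13"}


def uses_obsolete_tls(record: dict) -> bool:
    """Return True when a record has a TLS version that is not on the accepted list."""
    version = record.get("tls_version")
    # Records without a TLS version are skipped, mirroring tls_version:/.*/ in the search.
    return bool(version) and version not in ACCEPTED_TLS_VERSIONS
```

Matching on what is accepted, rather than listing every obsolete value, means older SSL versions are caught without having to enumerate how each one is labeled in your logs.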

Learn more about Analytic Co-Pilot use cases

Our team is happy to share more use cases that we worked on with our Analytic Co-Pilot customers. Over this last quarter, we have had several customers focus on authentication and scanning-based activities, looking at SQL Server, Office365, and default account usage. This is always good practice, especially as new techniques are developed, new systems are implemented within your environment, or just to ensure that all assets are properly monitored.

To find out how our Analytic Co-Pilot Services can improve your threat detection and response, learn more here. If you are a customer and you have questions, reach out to your customer success manager or account team to get more information about how we can help with your use cases and analytics.